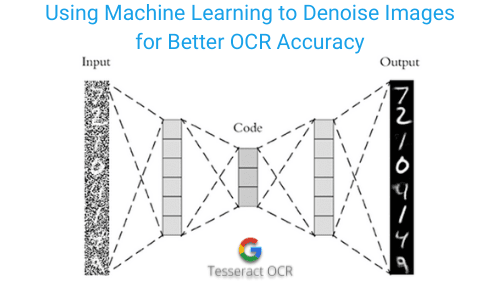

Image denoising is the process of removing noise from noisy images. It can be viewed as an image domain transfer task, i.e., mapping from a single or several noise-level domains to a photo-realistic domain. In this paper, we propose an effective image denoising method that learns two image priors from the perspective of domain alignment. We tackle the domain alignment on two levels: (1) a feature-level prior learns domain-invariant features for images corrupted with different noise levels, and (2) a pixel-level prior pushes the denoised images toward the natural image manifold.

The prevalent convolutional neural network (CNN) based image denoising methods extract features of images to restore the clean ground truth, achieving high denoising accuracy. However, these methods may ignore the underlying distribution of clean images, which can introduce distortions or artifacts in the denoising results. This paper proposes a new perspective that treats image denoising as a distribution learning and disentangling task. Since the noisy image distribution can be viewed as a joint distribution of clean images and noise, denoised images can be obtained by manipulating the latent representations toward their clean counterparts. This paper also provides a distribution-learning-based denoising framework. Following this framework, we present an invertible denoising network, FDN, which makes no assumptions about either the clean or the noise distribution, together with a distribution disentanglement method. Unlike previous CNN-based discriminative mapping, FDN learns the distribution of noisy images. Experimental results demonstrate FDN's capacity to remove synthetic additive white Gaussian noise (AWGN) on both category-specific and remote sensing images. Furthermore, FDN surpasses previously published methods in real image denoising while using fewer parameters and running faster.
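The FDN abstract relies on two properties: the network is exactly invertible, and denoising happens by editing latent representations and mapping them back. The toy, pure-Python sketch below illustrates both ideas. It is not FDN's actual architecture. It uses additive coupling layers, a standard way to build invertible networks. The `conditioner` function stands in for a learned sub-network and is hypothetical. Which latent coordinates count as "noise" (`noise_dims`) is also an arbitrary choice made for illustration.

```python
import math
import random

def conditioner(h):
    # Hypothetical fixed nonlinearity standing in for a learned sub-network.
    return [math.tanh(0.5 * v) + 0.1 * v for v in h]

def couple(x, sign):
    # Additive coupling: the first half passes through unchanged; the second
    # half is shifted by +/- conditioner(first half). sign=-1 undoes sign=+1.
    half = len(x) // 2
    a, b = x[:half], x[half:]
    shift = conditioner(a)
    return a + [bi + sign * si for bi, si in zip(b, shift)]

def swap(x):
    # Exchange the two halves so the next coupling transforms the other half.
    half = len(x) // 2
    return x[half:] + x[:half]

def flow_forward(x):
    assert len(x) % 2 == 0, "toy flow expects an even-length vector"
    return couple(swap(couple(x, +1)), +1)

def flow_inverse(z):
    # Undo the forward steps in reverse order; swap is its own inverse.
    assert len(z) % 2 == 0, "toy flow expects an even-length vector"
    return couple(swap(couple(z, -1)), -1)

def denoise_by_latent_edit(x, noise_dims):
    # Map to latent space, zero the coordinates we (hypothetically) treat as
    # the noise part, then map back through the exact inverse.
    z = flow_forward(x)
    z_edit = [0.0 if i in noise_dims else v for i, v in enumerate(z)]
    return flow_inverse(z_edit)

random.seed(0)
x = [random.gauss(0.0, 1.0) for _ in range(8)]
x_rec = flow_inverse(flow_forward(x))
print(max(abs(p - q) for p, q in zip(x, x_rec)))  # reconstruction error at float precision
print(denoise_by_latent_edit(x, noise_dims={4, 5, 6, 7}))
```

Because the inverse is exact, any change in the output comes only from the latent edit. A learned model would therefore have to place the noise in the coordinates that get discarded.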

Discriminative model learning for image denoising has recently attracted considerable attention due to its favorable denoising performance. In this paper, we take one step forward by investigating the construction of feed-forward denoising convolutional neural networks (DnCNNs), bringing the progress in very deep architectures, learning algorithms, and regularization methods to image denoising. Specifically, residual learning and batch normalization are used to speed up training as well as boost denoising performance. Unlike existing discriminative denoising models, which usually train a specific model for additive white Gaussian noise (AWGN) at a certain noise level, our DnCNN model can handle Gaussian denoising with an unknown noise level (i.e., blind Gaussian denoising). With the residual learning strategy, DnCNN implicitly removes the latent clean image in the hidden layers. This property motivates us to train a single DnCNN model to tackle several general image denoising tasks, such as Gaussian denoising, single image super-resolution, and JPEG image deblocking. Our extensive experiments demonstrate that our DnCNN model not only exhibits high effectiveness in several general image denoising tasks but can also be implemented efficiently by benefiting from GPU computing.

We tackle a challenging blind image denoising problem in which only single noisy images are available for training a denoiser, and nothing is known about the noise except that it is zero-mean, additive, and independent of the clean image. In such a setting, which often occurs in practice, a denoiser cannot be trained with standard discriminative training or with the recently developed Noise2Noise (N2N) training. The former requires the underlying clean image for each noisy image, and the latter requires a pair of independently realized noisy images of the same clean image. To that end, we propose the GAN2GAN (Generated-Artificial-Noise to Generated-Artificial-Noise) method. It first learns to generate synthetic noisy image pairs that simulate independent realizations of the noise in the given images, and then carries out N2N training of a denoiser with those synthetically generated pairs. Our method consists of three parts: extracting smooth noisy patches to learn the noise distribution in the given images, training a generative model to synthesize the noisy image pairs, and devising an iterative N2N training of a denoiser. Our results show that the denoiser trained with GAN2GAN, based solely on single noisy images, achieves impressive denoising performance. It almost matches standard discriminatively trained or N2N-trained models, which have access to more information than ours, and significantly outperforms recent baselines in the same setting.
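The first GAN2GAN step is extracting smooth noisy patches. The idea is that in a nearly flat region, subtracting the patch mean leaves mostly noise. The sketch below uses a simple mean/variance test of the kind found in earlier smooth-patch noise-modeling work. GAN2GAN's exact acceptance rule and thresholds may differ. The function names `sub_blocks`, `is_smooth` and `noise_sample`, as well as the values of `k`, `mu` and `gamma`, are hypothetical.

```python
from statistics import fmean, pvariance

def sub_blocks(patch, k):
    # Split a square patch (list of rows) into non-overlapping k x k blocks, each flattened.
    n = len(patch)
    return [
        [patch[r + i][c + j] for i in range(k) for j in range(k)]
        for r in range(0, n - k + 1, k)
        for c in range(0, n - k + 1, k)
    ]

def is_smooth(patch, k=4, mu=0.1, gamma=0.5):
    # Accept the patch only if every sub-block's mean and variance stay close
    # to those of the whole patch (relative tolerances mu and gamma).
    flat = [v for row in patch for v in row]
    m, var = fmean(flat), pvariance(flat)
    for block in sub_blocks(patch, k):
        if abs(fmean(block) - m) > mu * abs(m):
            return False
        if abs(pvariance(block) - var) > gamma * var:
            return False
    return True

def noise_sample(patch):
    # For an accepted smooth patch, subtracting its mean leaves an
    # approximately zero-mean noise sample.
    m = fmean(v for row in patch for v in row)
    return [[v - m for v in row] for row in patch]

# A flat patch with small zero-mean fluctuations vs. a patch containing a strong edge.
checker = [[0.5 + (0.05 if (r + c) % 2 == 0 else -0.05) for c in range(8)] for r in range(8)]
edge = [[0.0] * 4 + [1.0] * 4 for _ in range(8)]
print(is_smooth(checker), is_smooth(edge))  # True False
```

Rejecting the edge patch matters because its mean-subtracted values would contain image structure rather than noise. That structure would contaminate the noise distribution learned in the next step.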
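The GAN2GAN abstract depends on the property that makes N2N training work. When the target noise is zero-mean and independent of the input, least-squares fitting against a second noisy realization has, in expectation, the same minimizer as fitting against the clean signal. The toy below checks this numerically under loudly stated assumptions. The "denoiser" is a single scalar gain, and both signal and noise are Gaussian. `fit_gain` and `simulate` are hypothetical helpers. For this setup the optimal gain is var(s) / (var(s) + sigma^2) = 1 / (1 + 0.25) = 0.8.

```python
import random

def fit_gain(inputs, targets):
    # Least-squares gain a minimizing sum((a*x - t)^2): a = sum(x*t) / sum(x*x).
    num = sum(x * t for x, t in zip(inputs, targets))
    den = sum(x * x for x in inputs)
    return num / den

def simulate(n=100_000, sigma=0.5, seed=0):
    rng = random.Random(seed)
    clean = [rng.gauss(0.0, 1.0) for _ in range(n)]
    noisy_in = [s + rng.gauss(0.0, sigma) for s in clean]
    # Second, independent noise realization of the same clean signal (the N2N target).
    noisy_tgt = [s + rng.gauss(0.0, sigma) for s in clean]
    a_clean = fit_gain(noisy_in, clean)   # supervised: noisy input -> clean target
    a_n2n = fit_gain(noisy_in, noisy_tgt)  # N2N: noisy input -> noisy target
    return a_clean, a_n2n

a_clean, a_n2n = simulate()
print(a_clean, a_n2n)  # both should come out close to 0.8
```

GAN2GAN's contribution is supplying the second realization synthetically, with a generative model, when only one noisy image per scene exists.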
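The residual-learning strategy in the DnCNN abstract can be written out as follows. The notation reflects our understanding of the formulation in the DnCNN paper, so treat the symbols as our own labeling. The noisy observation is $y = x + v$, and the network $\mathcal{R}(y;\Theta)$ is trained to predict the residual $v$ rather than the clean image. The clean estimate is then recovered by subtraction, with the network trained on $N$ noisy/clean pairs $(y_i, x_i)$:

```latex
\begin{aligned}
y &= x + v, \qquad \hat{x} = y - \mathcal{R}(y;\Theta), \\
\ell(\Theta) &= \frac{1}{2N}\sum_{i=1}^{N}\bigl\|\mathcal{R}(y_i;\Theta) - (y_i - x_i)\bigr\|_F^2 .
\end{aligned}
```

Since the regression target $y_i - x_i$ is the noise itself, the hidden layers effectively have to strip away the image content. This is the sense in which DnCNN "implicitly removes the latent clean image in the hidden layers."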
